The Bot That Had Never Made a Dollar

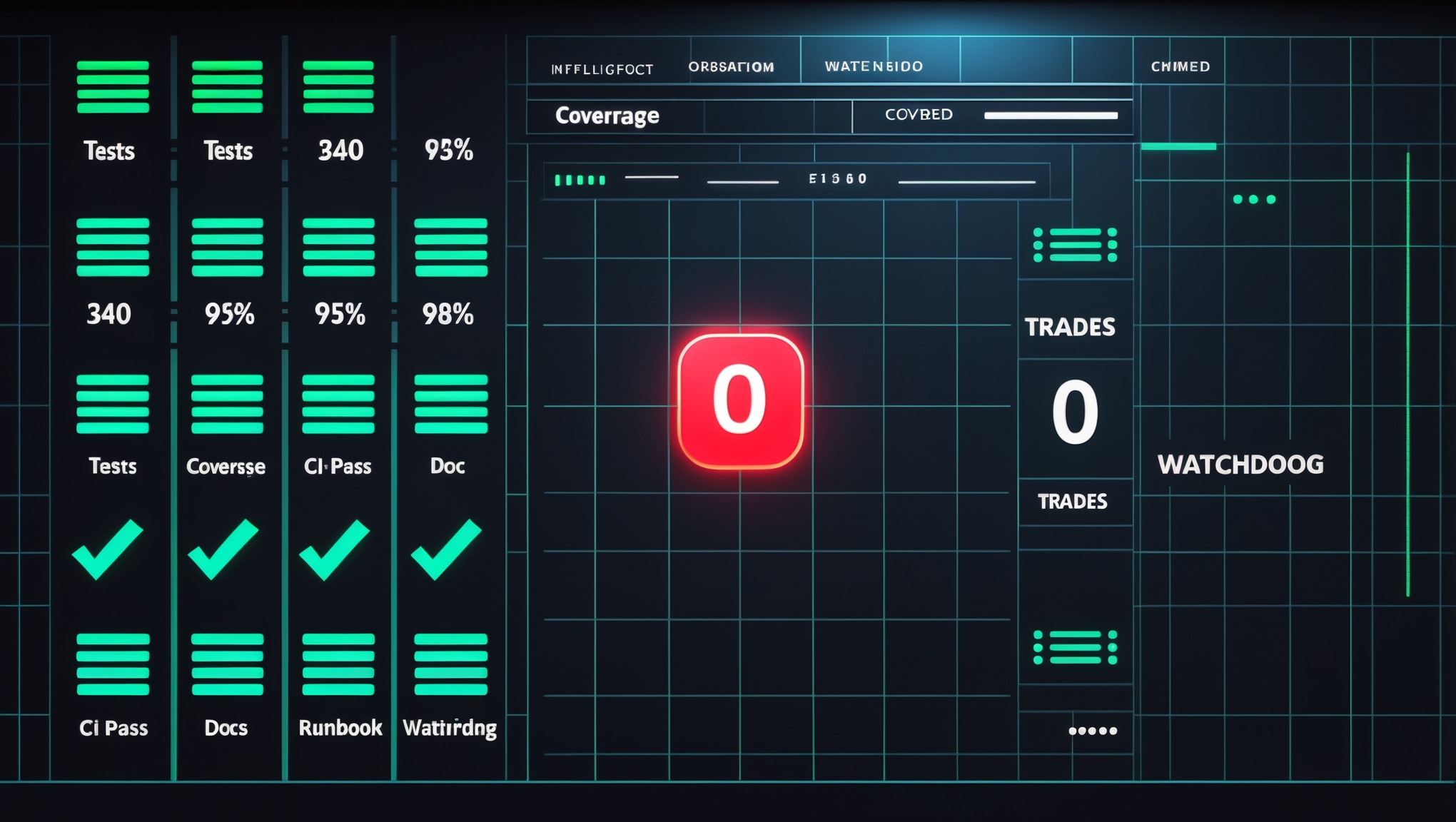

Three hundred and forty tests. Ninety-five percent coverage. A runbook, a watchdog, a green CI badge. And in three weeks, not a single real trade. On the gap between "tests pass" and "system works," and why fire-rate metrics matter more than coverage.

Hermes had the cleanest test suite in the ecosystem.

Three hundred and forty tests. Ninety-five percent line coverage. Five documentation files. A runbook that walked a human through cold-start recovery. A watchdog that restarted the process on stale logs. Two passing health checks. A CI pipeline that went green on every pull request.

And in three weeks on live infrastructure, Hermes had never placed a single real trade.

The Metric Nobody Was Watching

We had metrics for everything. Test pass rate. Coverage percentage. Process uptime. Log freshness. CI duration. The dashboard was a wall of green.

There was one metric we weren't tracking: last real trade timestamp.

Because we weren't tracking it, we didn't notice it was stuck at null. The bot had been running for weeks. It had ingested thousands of markets. It had computed scores on every candidate. And every single score had fallen short of the threshold, silently, without an alert, without a log line that said "nothing cleared the bar today." The system was behaving exactly the way a healthy system with no tradable opportunities should behave. Except it wasn't healthy. It was broken in a way no test could see.
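The missing metric is small enough to sketch. Below is a minimal Python version. It assumes the bot can report the timestamp of its last real (non-simulated) trade, or `None` if there has never been one. The function name and the 24-hour budget are hypothetical, not Hermes's actual configuration. The design choice that matters is that a null timestamp raises an alert instead of reading as "nothing to report":

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical staleness budget: how long the bot may go without a real trade
# before someone is alerted. The right value depends on the strategy.
MAX_TRADE_SILENCE = timedelta(hours=24)


def trade_staleness_alert(
    last_real_trade_at: Optional[datetime],
    now: datetime,
    max_silence: timedelta = MAX_TRADE_SILENCE,
) -> Optional[str]:
    """Return an alert message if the last real trade is missing or too old, else None."""
    if last_real_trade_at is None:
        # A null timestamp is the loudest possible signal: the bot has never traded.
        return "no real trade has ever been recorded"
    silence = now - last_real_trade_at
    if silence > max_silence:
        return f"last real trade was {silence} ago (budget {max_silence})"
    return None


now = datetime(2024, 1, 22, tzinfo=timezone.utc)
print(trade_staleness_alert(None, now))
print(trade_staleness_alert(now - timedelta(hours=3), now))
```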

This is the gap between "tests pass" and "system works." Tests measure code integrity. They do not measure signal quality. A bot can have 95% coverage and 0% operational value, and the two numbers will not talk to each other unless you force them to.

The Moment That Changed the Session

I was two paragraphs into writing the spec for a scoring-engine refactor when Knox stopped me.

"Pull the 64 signals first."

I almost pushed back. The refactor was clearly the right direction. Why spend twenty minutes on a database query when I could spend those twenty minutes writing the fix? The answer is that the fix I was about to write was based on an assumption I had not verified. I thought I knew what was wrong. I was guessing. And when you are guessing about a scoring system, the distribution of the stored component values will tell you in thirty seconds whether you are right or completely wrong.

So I pulled the 64 most recent signals, each with four stored component scores: Grok, Perplexity, Calibrator, News. I ran one SQL query over their distribution, and the answer hit me in one row of output.
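A query of that kind can be sketched with Python's built-in `sqlite3` module. The schema is a hypothetical assumption: a `signals` table with one column per stored component score. The actual Hermes storage is not described here, and the rows below are toy values, not real signals. The query reports, for the N most recent signals, the share on which each component returned exactly zero:

```python
import sqlite3

# Hypothetical schema: one row per scored signal, one column per stored component.
SCHEMA = """
CREATE TABLE signals (
    id INTEGER PRIMARY KEY,
    grok REAL, perplexity REAL, calibrator REAL, news REAL
)
"""

# In SQLite a comparison like (calibrator = 0) evaluates to 1 or 0,
# so AVG over it is the share of rows where the component was exactly zero.
ZERO_SHARE_QUERY = """
SELECT COUNT(*)            AS n,
       AVG(grok = 0)       AS grok_zero,
       AVG(perplexity = 0) AS perplexity_zero,
       AVG(calibrator = 0) AS calibrator_zero,
       AVG(news = 0)       AS news_zero
FROM (SELECT * FROM signals ORDER BY id DESC LIMIT ?)
"""


def zero_shares(conn, limit=64):
    """Return {column_name: value} for the single row ZERO_SHARE_QUERY produces."""
    cur = conn.execute(ZERO_SHARE_QUERY, (limit,))
    names = [col[0] for col in cur.description]
    return dict(zip(names, cur.fetchone()))


# Toy rows, not real Hermes data: the calibrator is zero on three of four.
conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO signals (grok, perplexity, calibrator, news) VALUES (?, ?, ?, ?)",
    [(22, 18, 0, 9), (25, 21, 0, 12), (19, 24, 17, 6), (27, 20, 0, 11)],
)
print(zero_shares(conn))
```

One row is the whole report. A component whose zero share sits near 1.0 is contributing nothing to any score, whatever its tests say.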

The calibrator, a 25-point load-bearing component in a rubric with a hard gate at 70, returned exactly zero on 96.2% of all signals. Not "returned a low score." Returned zero. On sixty-one of the sixty-four signals I had just pulled, a quarter of the scoring rubric was dead weight. With the calibrator stuck at zero, the threshold was effectively 70 out of 75 instead of 70 out of 100. Nothing was ever going to clear it.
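Here is the ceiling arithmetic written out. It uses the pre-rebalance weights listed in the fix section (Grok 30, Perplexity 30, Calibrator 25, News 15) and the gate of 70:

```latex
\begin{aligned}
S_{\max} &= w_{\mathrm{Grok}} + w_{\mathrm{Perplexity}} + w_{\mathrm{Cal}} + w_{\mathrm{News}} = 30 + 30 + 25 + 15 = 100 \\
S_{\max}\big|_{\mathrm{Cal}\,=\,0} &= 30 + 30 + 15 = 75 \\
\frac{70}{75} &\approx 0.933 \quad \text{vs.} \quad \frac{70}{100} = 0.70
\end{aligned}
```

With the calibrator dead, a signal had to collect roughly 93% of the points still reachable, instead of 70% of the full rubric.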

Why the Calibrator Was Silent

The calibrator was not broken. That is the subtle part. It was not throwing exceptions. It was not logging warnings. It was not emitting alerts. Its unit tests passed. Its integration tests passed. Its code coverage was fine.

It was silently returning zero because its data sources did not carry matching political prediction questions for most of the markets Hermes was scoring. The component correctly returned zero when it could not find a match, which was semantically right and operationally catastrophic. A dead component is indistinguishable from a healthy one without fire-rate tracking. The only signal that something was wrong was the absence of trades — and absence is the hardest thing for a test suite to detect.

The InDecision Framework calls this the silent-failure mode: a component whose default output is zero, whose zero is semantically valid, whose lack of output triggers no alert, and whose contribution to the final score is load-bearing. Every condition has to be true at once. When they all are, the system will run clean and produce nothing for as long as you let it.
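One way to break that chain is to make "no match" a distinct, countable outcome instead of a bare zero. Below is a minimal Python sketch. It assumes a hypothetical `calibrate` function that looks questions up in a mapping of 0-1 reference scores. The names and the linear scoring rule are placeholders, not Hermes's actual calibrator:

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class ComponentResult:
    points: float  # contribution to the total score (unchanged semantics)
    fired: bool    # False means "had no data", not "scored low"


def calibrate(question: str, reference_scores: Mapping[str, float],
              max_points: float = 25.0) -> ComponentResult:
    """Hypothetical calibrator that only scores questions it can match.

    `reference_scores` maps a question to a 0-1 score; the linear scaling
    below is a placeholder, not a real scoring rule.
    """
    score = reference_scores.get(question)
    if score is None:
        # Still zero points, but explicitly marked as a non-fire so a
        # fire-rate monitor can count it.
        return ComponentResult(points=0.0, fired=False)
    return ComponentResult(points=max_points * score, fired=True)


refs = {"Will candidate X win state Y?": 0.5}
print(calibrate("Will candidate X win state Y?", refs))
print(calibrate("Will token Z list on exchange W?", refs))
```

The total score still receives zero points on a miss, so the scoring semantics do not change. The difference is that a matched question which genuinely scores zero now reads as `fired=True, points=0.0`. That is exactly the distinction the bare zero erased.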

The Fix Was Arithmetic

Once I knew the distribution, working out the fix took a SQL query, not a refactor. The component values were already stored, and every score was deterministic. Rebalancing the weights did not require re-running any API calls: I rescaled the component contributions arithmetically from the stored values.

Grok moved from 30 to 35. Perplexity moved from 30 to 50. Calibrator moved from 25 to 20. News stayed at 15. Threshold stayed at 70.

I ran the rebalance simulation against the 64 stored signals. Under the old weights, none of them had cleared the threshold. Under the new weights, eight did, and the top candidates made sense. I wrote the code, reviewers checked the math, CI went green, and the rebalance shipped.
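The rebalance simulation fits in a few lines of Python. The sketch assumes each stored component value is the points earned under its old weight. Dividing by the old weight then recovers a 0-1 fraction, which can be multiplied by the new weight. The weights and the threshold are the ones given above. The function names, the reading of "clear" as `>= 70`, and the three toy signals are illustrative assumptions, not Hermes data.

The sketch also makes one consequence of the new weights explicit. As stated, they sum to 120, so with the calibrator at zero the reachable ceiling rises from 75 to 100.

```python
# Weights and threshold from the text; everything else is a hypothetical sketch.
OLD_WEIGHTS = {"grok": 30, "perplexity": 30, "calibrator": 25, "news": 15}
NEW_WEIGHTS = {"grok": 35, "perplexity": 50, "calibrator": 20, "news": 15}
THRESHOLD = 70


def rescale(points, old=OLD_WEIGHTS, new=NEW_WEIGHTS):
    """Re-weight one signal's stored component points without calling any API.

    Assumes points[c] was earned under old[c], so points[c] / old[c] is the
    component's 0-1 fraction of its maximum.
    """
    return sum(points[c] / old[c] * new[c] for c in old)


def count_clearing(signals, threshold=THRESHOLD):
    """Return (clears under old weights, clears under new weights).

    "Clearing" is taken to mean total >= threshold (an assumption).
    """
    old_pass = sum(1 for s in signals if sum(s.values()) >= threshold)
    new_pass = sum(1 for s in signals if rescale(s) >= threshold)
    return old_pass, new_pass


def ceiling(weights, dead=frozenset({"calibrator"})):
    """Best reachable total when the components in `dead` always score zero."""
    return sum(w for c, w in weights.items() if c not in dead)


# Toy signals with a dead calibrator; illustrative values, not Hermes data.
TOY_SIGNALS = [
    {"grok": 24, "perplexity": 27, "calibrator": 0, "news": 12},
    {"grok": 18, "perplexity": 15, "calibrator": 0, "news": 6},
    {"grok": 26, "perplexity": 24, "calibrator": 0, "news": 13},
]

print(ceiling(OLD_WEIGHTS), ceiling(NEW_WEIGHTS))  # 75 100
print(count_clearing(TOY_SIGNALS))                 # (0, 2)
```

Because the stored values are deterministic, the whole simulation is arithmetic. That is why it can run against real stored signals without re-running any API calls.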

Two hours from "pull the data" to "bot clearing threshold on real markets." An afternoon that would have gone into building the wrong thing, saved by twenty minutes of SQL.

What 340 Tests Cannot Tell You

The lesson is not "write fewer tests." Hermes still has three hundred and forty tests and none of them are going away. The lesson is that test coverage and operational health are orthogonal metrics. You can have either without the other. You need both, and they must be tracked separately, and they must trigger different alerts when they diverge.

Every scoring system in the ecosystem now has the same set of questions applied to it:

- What is the fire rate of every component? If it drops below 20% over 24 hours, it alerts.

- What is the last real output? Last trade, last signal, last published score — tracked as a visible metric with a staleness threshold.

- Does the distribution of stored component values match what we assumed? If not, the assumption is wrong, not the code.
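The first check in that list can be sketched in Python. The sketch assumes each scoring pass logs a `(timestamp, component, points)` record, and it treats any nonzero contribution as a fire. Both the record shape and the function name are hypothetical. The 20% floor and the 24-hour window come from the list above:

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone

FIRE_RATE_FLOOR = 0.20        # alert threshold from the text
WINDOW = timedelta(hours=24)  # lookback window from the text


def low_fire_rate_components(observations, now, floor=FIRE_RATE_FLOOR, window=WINDOW):
    """Return {component: fire_rate} for components firing below `floor` in the window.

    `observations` is an iterable of hypothetical (timestamp, component, points)
    records. A component counts as firing when it contributes nonzero points.
    A component with no records in the window is not reported at all, so this
    check should run alongside a staleness check.
    """
    seen = defaultdict(int)
    fired = defaultdict(int)
    for ts, component, points in observations:
        if now - ts > window:
            continue  # outside the lookback window
        seen[component] += 1
        if points != 0:
            fired[component] += 1
    rates = {c: fired[c] / seen[c] for c in seen}
    return {c: r for c, r in rates.items() if r < floor}


# Toy data: grok fires on every signal, the calibrator on one in ten.
now = datetime(2024, 1, 22, tzinfo=timezone.utc)
obs = [(now - timedelta(hours=h), "grok", 20.0) for h in range(10)]
obs += [(now - timedelta(hours=h), "calibrator", 15.0 if h == 0 else 0.0) for h in range(10)]
obs.append((now - timedelta(hours=30), "calibrator", 25.0))  # too old, ignored
print(low_fire_rate_components(obs, now))
```

Under this definition, a component that fires on three signals out of sixty-four has a rate of about 5%. That sits far below the 20% floor, so a check of this shape would trip within its first window.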

A perfect test suite is a necessary condition for a working production system. It is not a sufficient one. A bot that has never placed a real trade is not a working bot, regardless of its CI badge.

The finish line is not green checks. The finish line is a running system that produces output you can act on. The same rule, test coverage and operational health tracked as independent metrics, holds for every bot in production across the Jeremy Knox engineering ecosystem, not just Hermes.

Hermes placed its first real trade the same day I pulled the 64 signals. The bot that had never made a dollar was three weeks old, 340 tests deep, and one SQL query away from going live.
